Tech Topic | July 2020 Hearing Review

By Erik Harry Høydal, MSc; Rosa-Linde Fischer, PhD; Vera Wolf, Dipl-Ing; Eric Branda, AuD, PhD, and Marc Aubreville, PhD

Results of this study show that the Signia Assistant smartphone app helps the end user safely obtain the best possible solution for a given situation. This marks an important step from assumption-based to data-driven knowledge, and a move away from a “one-size-fits-all approach” toward precisely tuned hearing for each individual via artificial intelligence.

It is well recognized that the starting point for determining appropriate frequency-specific gain and output for a hearing aid wearer is the use of a validated prescriptive fitting approach. It is also well known that, for most wearers, fine-tuning is necessary—either on the day of the fitting and/or following real-world listening experiences. This can be due to user variations in preferred loudness levels, preferences for sound quality, listening in noise situations, own-voice issues, and many other factors. Post-fitting adjustments, therefore, are an established component of the hearing aid fitting process.

The ability to adjust frequency response and other hearing aid parameters evolved with the technology. It progressed from the basic analog BTE designs of the 1950s, to screwdriver-adjusted trimmer potentiometers (trimpots) from the late 1950s through the 1980s, to the much more precise fittings afforded by the digitally programmable hearing aids of the 1990s, and finally to the fully digital age, in which individualized fittings and features can be maximized. As the hearing care professional (HCP) gained the ability to make precise adjustments across many hearing aid parameters, the responsibility to “get it right” grew with it: hearing aid wearers came to expect optimization once they learned that adjustments could easily be made during post-fitting visits. The right adjustment for a given user complaint, however, is not always obvious. When the wearer tells the HCP that “Things are just too loud,” should he or she change overall gain, the AGCi kneepoints, or the AGCo kneepoints? And at which frequencies?

To bring some order to this new world of hearing aid adjustability, Jenstad et al1 conducted a survey of clinical audiologists and developed a vocabulary of 40 different terms that patients use to describe their fine-tuning needs. In a follow-up survey, the authors asked 24 “expert” audiologists to describe how they would adjust the hearing aid fitting when the patient’s complaint was one of the frequently reported terms. The experts agreed to a high degree, providing a starting point for an expert system for fine-tuning hearing aid fittings. Based in part on these findings, Siemens developed a Fitting Assistant as part of the Connexx fitting software. The software suggested programming changes based on the wearer’s complaints, and the HCP could then implement them if desired.
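A rule-based expert system of this kind can be pictured as a fixed lookup from complaint term to suggested adjustment. The sketch below is purely illustrative: the complaint terms, parameter names, and step sizes are hypothetical placeholders, not the Jenstad et al vocabulary, their experts’ recommendations, or the Connexx Fitting Assistant’s logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Adjustment:
    parameter: str   # hypothetical parameter name, e.g. "gain"
    bands: tuple     # frequency region affected, e.g. ("high",)
    step_db: float   # signed change in dB


# Hypothetical complaint -> adjustment table, for illustration only.
RULES = {
    "too loud": [Adjustment("gain_high_input", ("all",), -3.0)],
    "tinny": [Adjustment("gain", ("high",), -2.0)],
    "muffled": [Adjustment("gain", ("high",), +2.0)],
    "boomy": [Adjustment("gain", ("low",), -2.0)],
}


def suggest(complaint: str) -> list:
    """Return the fixed suggestions for a complaint term, or [] if the term is unknown."""
    return RULES.get(complaint.strip().lower(), [])
```

For example, `suggest("Too loud")` always returns the same single adjustment. That determinism is the point of the illustration: a static table gives the same answer regardless of the listening situation or the individual wearer.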

Today, on a post-fitting visit, the HCP can make thousands of different changes to many hearing aid parameters, and expert troubleshooting guides are available. Nevertheless, questions remain regarding the efficiency and validity of this time-honored approach.

1) Access and convenience. Traditionally, to obtain post-fitting adjustments, the user must return to the HCP’s clinic or office. This is at best a nuisance and, for some individuals, a considerable hardship, especially when physical appointments are practically impossible (eg, because of illness, disability, or lack of transportation). Additionally, return visits may simply not be possible in a timely manner for unanticipated reasons. We recognize that post-fitting visits, especially for a new user, can be very helpful for assistance with hearing aid use and handling, and for additional informational counseling. However, continued visits for fitting changes have been shown to reduce hearing aid satisfaction.2 Moreover, some patients will not go through the trouble of returning for adjustments; they simply stop using the hearing aids or, if the aids were recently purchased, return them for credit.

To some extent, this issue can be solved with remote programming solutions, such as Signia TeleCare,3,4 although this still requires communication with the HCP.

2) Defining the hearing/listening problem. An issue that affects the validity of post-fitting changes is that the fine-tuning is based on the patient’s memory of a given listening situation, and it is simply not possible to remember accurately how something sounded days or weeks ago. For example, suppose the user’s complaint is difficulty understanding a talker in background noise. The appropriate change to the fitting will vary with the overall SPL of the listening environment, the signal-to-noise ratio (SNR), and the hearing aid settings at the time of the listening experience. What often happens is that the HCP hears a vague story about a listening experience and then tries to fix the problem in a quiet, non-representative environment.

These different listening situations are difficult to simulate in a research laboratory, and nearly impossible in the fitting office. In most cases, the HCP is left guessing what the actual listening situation might have been. Guessing wrong means that the wearer will be back for another change soon—or will simply give up.

3) Complexity of parameter adjustments. As we’ve mentioned, the key to post-fitting fine-tuning is not only knowing the specific problem, but also knowing which change in the fitting software has the greatest probability of solving it. This is especially problematic for inexperienced HCPs, who tend to rely on the manufacturer’s default settings. Additionally, while some recent data are available,5 many of the “established” adjustments for a given problem are based on data from the Jenstad et al1 survey, conducted about 20 years ago, when analog products were still common and many digital products had only 2-4 channels.

Fine-tuning modern hearing aids is a different process. Regardless of how skilled you are, it is difficult to be spot on without precise information.

Fine-Tuning 2020

As reviewed above, the fine-tuning process we have been familiar with for the past 20 years has several limitations. Fortunately, solutions are now available. In recent years, researchers have shown the advantages of Ecological Momentary Assessment (EMA), in which wearers report on their experience in the moment and in their real-world environments, for evaluating real-world hearing aid performance. As reported in publications describing TeleCare,3,4 smartphone apps now make it possible to use EMA in routine clinical practice. When the wearer opens the app in a given listening situation, the hearing aid retrieves the acoustic information and the hearing aid settings at that moment. The wearer no longer needs to “remember” the conditions under which a given problem occurred.
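The key idea of EMA here is that the listening context is captured at the moment of the request rather than reconstructed from memory. The sketch below assumes a hypothetical record layout; the field names and the `read_acoustics` and `read_settings` callables are stand-ins for the hearing aid link, not the data the Signia Assistant actually retrieves.

```python
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    """One EMA event (hypothetical fields)."""
    timestamp: float          # when the wearer opened the app (seconds since epoch)
    overall_spl_db: float     # overall sound level at the hearing aid microphones
    estimated_snr_db: float   # the aid's estimate of the signal-to-noise ratio
    program: str              # active hearing program
    settings: dict            # parameter values active at that moment


def capture_snapshot(read_acoustics, read_settings, program="Universal"):
    """Freeze the acoustic scene and the current settings at the moment of the request."""
    spl_db, snr_db = read_acoustics()
    return Snapshot(
        timestamp=time.time(),
        overall_spl_db=spl_db,
        estimated_snr_db=snr_db,
        program=program,
        settings=dict(read_settings()),  # copy, so later changes don't alter the record
    )


# Usage with stub readers in place of a real device connection:
event = capture_snapshot(lambda: (68.0, 4.0),
                         lambda: {"gain_offset_db": 0, "directionality": "auto"})
```

Because the snapshot stores the level, the SNR estimate, and the active settings together, a later adjustment can be chosen for the situation the wearer was actually in.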

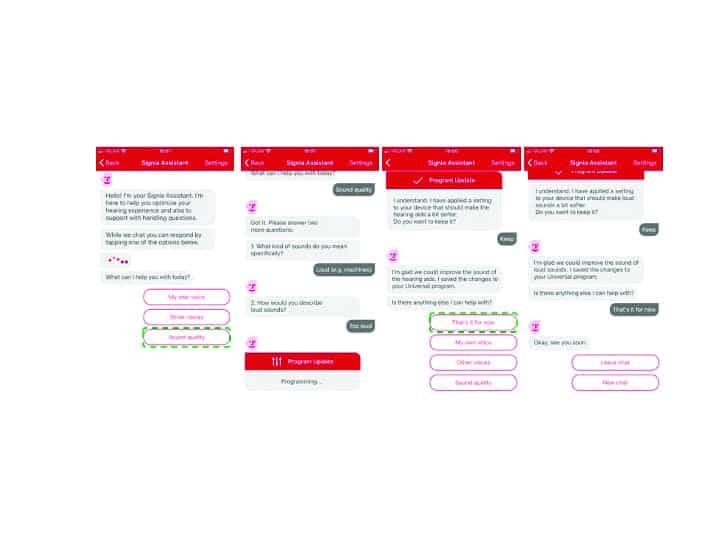

The next step is to guide the wearer to provide specific information about the problem. An automatic programming change is then made accordingly, and the wearer notes whether the problem is solved. Figure 1 shows how a user in a real-world setting can take out a smartphone, open the Signia Assistant, and start a conversation that ends in an appropriate hearing solution. A description of how this process works follows.

Fine-Tuning and the Use of AI and Neural Networks

As mentioned earlier, modern hearing aids allow many adjustments, and many features interact with one another. The Signia Assistant addresses this by using artificial intelligence (AI) to learn the customer’s individual preferences in the given situation.6 It also adapts its built-in knowledge about which solutions work best across many customers.

Although AI is used as a buzzword in many fields, its advances truly shine in Artificial Neural Networks, which are strongly inspired by how the human brain works. Our brain is composed of a vast number of cells called neurons. Our ability to learn comes from the fact that these neurons communicate with each other and create new pathways, enabling us, for example, to gain new skills or learn new languages.

Earlier machine learning approaches were often created once to replicate a certain behavior, given to them in the form of examples, and were never modified later. Current approaches are much more dynamic. Apart from compensating for the individual hearing loss, most initial (first-fit) hearing aid settings are tuned to suit a group of wearers. While this makes those fixed values a good choice on average, individual preferences can differ strongly. Data-driven approaches recognize that there may be more knowledge than we can currently grasp, and neural network approaches can be quickly adapted to reflect newly acquired knowledge, even without the need to describe those relationships verbally.
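To make “quickly adapted to reflect newly acquired knowledge” concrete, here is a toy sketch of a single logistic unit (the basic building block of a neural network) that updates its weights after every piece of feedback. It is an illustration under stated assumptions: the feature encoding is hypothetical, and the Signia Assistant’s actual network architecture and training procedure are not described in this article.

```python
import math


class OnlineNeuron:
    """A single logistic unit updated by stochastic gradient descent, one example at a time."""

    def __init__(self, n_features, lr=0.1):
        self.w = [0.0] * n_features
        self.b = 0.0
        self.lr = lr

    def predict(self, x):
        """Estimated probability that a proposed change will be accepted."""
        z = self.b + sum(wi * xi for wi, xi in zip(self.w, x))
        return 1.0 / (1.0 + math.exp(-z))

    def update(self, x, accepted):
        """One gradient step on the log-loss; `accepted` is 1 (helped) or 0 (did not)."""
        err = self.predict(x) - accepted  # gradient of log-loss w.r.t. z is (p - y)
        self.w = [wi - self.lr * err * xi for wi, xi in zip(self.w, x)]
        self.b -= self.lr * err


# Hypothetical features: [noisy situation (0/1), experienced user (0/1)]
neuron = OnlineNeuron(n_features=2)
for _ in range(50):
    neuron.update([1.0, 0.0], accepted=1)
```

Unlike a model trained once and then frozen, each new rating shifts the weights immediately. No rule ever has to be written down: the relationship lives in the learned weights.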

Signia Assistant at Work

Hearing aid wearers use the universal hearing program in the vast majority of situations; consequently, fine-tuning by the expert also often focuses on this program. This is why the Signia Assistant also modifies this program: to ensure the patient benefits from fine-tuning in a large number of daily situations. All of the settings it changes belong to adaptive features, be it Own Voice Processing (OVP), directionality and noise reduction, or compression. As a result, the situation awareness already built into these algorithms is combined with seamless transitions, for an individualized but stable sound perception.

Let’s say a wearer tells the Assistant that he or she would like to hear a conversation partner better. A possible solution could include changes in gain, compression, or directionality and noise reduction. The best solution, however, is highly individual; it might depend on the individual’s hearing loss, cognitive capabilities, or simply their sound preferences. It also depends strongly on the listening situation, which is likely to differ from the one in which the HCP fitted the devices. This complex, multi-dimensional dependency is hard to capture in simple rules, and this is where artificial neural networks can help.6

With the Signia Assistant, two people could sit next to each other at the same table, make the same request, and still get two different changes to their hearing aid settings. How? Expert knowledge is not definitive: there are established “facts” about what works best, but we cannot know for certain in every (or any) situation. Therefore, one can only build a system around what we consider the most likely best solution (change to the hearing aid). This provides a strong basis, but it also gives the Signia Assistant room to explore different solutions and learn whether something else might work even better.

If the alternative does not work better, the first, most obvious solution gains strength in future decision-making. If, however, the alternative proves better over time for many wearers, it climbs the hierarchy and becomes more likely to be used by the Signia Assistant in similar situations in the future.
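The explore-and-reinforce behavior described above resembles a multi-armed bandit. The sketch below uses epsilon-greedy selection, a standard bandit technique chosen here for illustration. The article does not say which exploration scheme the Signia Assistant uses, and the solution names and prior counts are hypothetical.

```python
import random


class SolutionExplorer:
    """Epsilon-greedy choice among candidate fine-tuning solutions."""

    def __init__(self, solutions, epsilon=0.1, prior_best=None, seed=None):
        # [successes, trials]; a weak 50% prior for every candidate
        self.stats = {s: [1, 2] for s in solutions}
        if prior_best is not None:
            self.stats[prior_best] = [3, 4]  # expert knowledge as a head start (75%)
        self.epsilon = epsilon
        self.rng = random.Random(seed)

    def rate(self, solution):
        ok, n = self.stats[solution]
        return ok / n

    def choose(self):
        # Occasionally explore an alternative; otherwise exploit the best-rated one.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(list(self.stats))
        return max(self.stats, key=self.rate)

    def feedback(self, solution, solved):
        self.stats[solution][0] += int(solved)
        self.stats[solution][1] += 1


explorer = SolutionExplorer(["more_gain", "more_directionality"],
                            epsilon=0.0, prior_best="more_gain", seed=1)
first_choice = explorer.choose()
for _ in range(10):
    explorer.feedback("more_directionality", True)
    explorer.feedback("more_gain", False)
later_choice = explorer.choose()
```

The expert default starts ahead. Once the alternative collects more “problem solved” feedback, its success rate overtakes the default and it becomes the preferred choice. If instead the default kept succeeding, its lead would simply grow.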

The neural network of the Signia Assistant can also identify that wearers with certain characteristics are more likely to benefit from a particular solution. Previous hearing aid experience, certain hearing loss configurations, and sensitivity to noise are all examples of such characteristics. Thus, one group of users with a set of common attributes may be given different changes to their hearing aid settings than another group.
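One simple way to picture group-dependent solutions is to keep success statistics per (group, solution) pair. The sketch below is a deliberately plain stand-in for what the article attributes to the neural network. The group attributes mirror the examples in the text, but the encoding, the solution names, and the Laplace smoothing are assumptions for illustration.

```python
from collections import defaultdict


class GroupedRates:
    """Success statistics per (user group, solution) pair."""

    def __init__(self):
        # (group, solution) -> [successes, trials]
        self.counts = defaultdict(lambda: [0, 0])

    @staticmethod
    def group_key(experienced: bool, noise_sensitive: bool, sloping_loss: bool):
        return (experienced, noise_sensitive, sloping_loss)

    def record(self, group, solution, solved):
        c = self.counts[(group, solution)]
        c[0] += int(solved)
        c[1] += 1

    def best(self, group, solutions):
        def rate(s):
            ok, n = self.counts[(group, s)]
            return (ok + 1) / (n + 2)  # Laplace smoothing: unseen pairs score 0.5
        return max(solutions, key=rate)


rates = GroupedRates()
g1 = rates.group_key(experienced=True, noise_sensitive=False, sloping_loss=True)
g2 = rates.group_key(experienced=False, noise_sensitive=True, sloping_loss=True)
for _ in range(3):
    rates.record(g1, "more_gain", True)
    rates.record(g2, "more_noise_reduction", True)
    rates.record(g2, "more_gain", False)
```

Given the same request, `rates.best(g1, ...)` and `rates.best(g2, ...)` can return different solutions, because each group’s history is tracked separately.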

Additionally, the Signia Assistant remembers the individual’s previous preferences and uses them as an additional factor when weighing which solutions might work best for that individual. In this way, the two people at the same table, say in a noisy restaurant, will each get the solution that the neural network has identified as best specifically for them.
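A common way to combine population knowledge with one person’s history is to treat the population estimate as a prior worth a fixed number of pseudo-trials (beta-binomial smoothing). This is an assumed technique chosen for illustration; the article does not specify how the Assistant weighs individual preferences, and `prior_strength` is a hypothetical tuning parameter.

```python
def blended_score(pop_ok, pop_n, ind_ok, ind_n, prior_strength=10.0):
    """Estimated chance that a solution helps this wearer.

    pop_ok/pop_n: successes/trials across many wearers.
    ind_ok/ind_n: successes/trials for this wearer.
    The population rate counts as `prior_strength` pseudo-trials, so the wearer's
    own feedback gradually pulls the estimate toward their personal success rate.
    """
    pop_rate = (pop_ok + 1) / (pop_n + 2)
    return (prior_strength * pop_rate + ind_ok) / (prior_strength + ind_n)


# A solution that works for most wearers but never helped this one...
popular = blended_score(80, 98, 0, 10)
# ...versus a less popular solution this wearer has liked 9 times out of 10.
personal = blended_score(50, 98, 9, 10)
```

With no personal history the estimate equals the population rate. After ten personal ratings, the less popular solution (0.705) outranks the popular one (0.405) for this wearer.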

Research Validation

A validation study of the Signia Assistant was conducted at the WS Audiology ALOHA (Audiology Lab for Optimization of Hearing Aids) in Piscataway, NJ. Fifteen individuals (7 males and 8 females; mean age 61 years, range 33-78) with bilateral sensorineural hearing loss participated. All were experienced hearing aid users. Their mean audiogram was symmetrical and downward sloping, from 30-40 dB HL in the low frequencies to 60 dB HL at 4000 Hz. A recruitment criterion was that participants owned, and were able to operate, a smartphone.

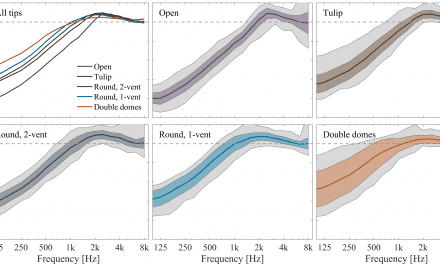

The participants were fitted bilaterally with Signia Xperience Pure 7X RIC hearing aids using the Connexx 9.2 fitting software, with Click Sleeve fitting tips selected to suit each individual’s hearing loss. The hearing aids were fitted to the NAL-NL2 prescriptive method, and the fittings were verified with probe-microphone measurements. Special features were set to default parameters, and OVP was trained and activated. All settings were copied to a second program, which later served as the reference for evaluating the changes users made during the home trial.

With the participants aided bilaterally, speech recognition testing was conducted in an audiometric test suite. For recognition in quiet, the speech material was the Auditec recording of the NU-6 monosyllabic word lists, presented at 55 dB SPL. The second speech recognition measure was the American English Matrix Test (AEMT), an adaptive sentence test with competing speech noise presented at 65 dB SPL. The test criterion was the SRT80, the SNR at which participants repeated 80% of the words in the sentences correctly. For both speech measures, the target speech was presented from 0° azimuth (front); for the AEMT, the competing speech noise was delivered from 180° (back).
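An adaptive test homes in on the SNR that yields the target score by making the next sentence harder after good performance and easier after poor performance. The sketch below uses a generic stochastic-approximation rule driven by the proportion of words correct. It is a simplified stand-in, not the AEMT’s published adaptive procedure, and the step size, sentence count, and simulated listener are assumptions.

```python
import math


def track_srt80(score_sentence, start_snr_db=0.0, n_sentences=30, step_db=4.0, target=0.8):
    """Estimate the SNR at which the listener repeats `target` of the words correctly."""
    snr_db = start_snr_db
    presented = []
    for _ in range(n_sentences):
        presented.append(snr_db)
        p_correct = score_sentence(snr_db)        # proportion of words repeated correctly (0..1)
        snr_db -= step_db * (p_correct - target)  # above target -> harder; below -> easier
    tail = presented[-10:]
    return sum(tail) / len(tail)                  # estimate: mean SNR of the last 10 sentences


def simulated_listener(snr_db, srt50_db=-5.0, slope_db=1.5):
    """Hypothetical noise-free logistic psychometric function, used only to exercise the track."""
    return 1.0 / (1.0 + math.exp(-(snr_db - srt50_db) / slope_db))


estimate = track_srt80(simulated_listener)
true_srt80 = -5.0 + 1.5 * math.log(4)  # SNR where the logistic reaches 0.8
```

With a real (noisy) listener the track fluctuates around the target, which is why the estimate averages the last several presentation levels rather than taking the final one.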

Following the initial lab testing, the participants were transferred to an independent usability resources research group, where they were instructed in the use of the Signia Assistant app. After successful training, the participants used the hearing aids in a 10- to 14-day home trial. They were instructed to use the app on demand in their daily lives and were given a list of specific listening situations outside the home to experience. During the first week, the participants were contacted to confirm that they were using the app, were taking part in the requested listening experiences, and understood its operation. They were also asked to complete the System Usability Scale by Brooke8 to rate the app’s user friendliness.
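For reference, the System Usability Scale is conventionally scored as follows. Each of the ten items is rated from 1 to 5. Odd-numbered (positively worded) items contribute the rating minus 1, and even-numbered (negatively worded) items contribute 5 minus the rating. The sum is multiplied by 2.5 to give a score from 0 to 100. The sketch implements that standard rule; the example responses are invented.

```python
def sus_score(responses):
    """System Usability Scale score (0-100) from ten 1-5 ratings, item 1 first."""
    if len(responses) != 10 or any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("SUS needs ten ratings on a 1-5 scale")
    total = 0
    for i, r in enumerate(responses):
        # i == 0 is item 1: even indices are the odd-numbered, positively worded items.
        total += (r - 1) if i % 2 == 0 else (5 - r)
    return total * 2.5


# Invented example: a respondent who strongly agrees with every positive item
# and strongly disagrees with every negative one.
best_case = sus_score([5, 1] * 5)
```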

Following the home trial period, the participants returned to the ALOHA, at which time speech recognition testing was again conducted. For this visit, speech in quiet and the AEMT were administered for both the original NAL-NL2 programmed settings (stored in the 2nd program) and for the fitting that resulted from the use of the Signia Assistant (1st program “Universal”). The participants also completed a questionnaire on their experiences regarding benefit and satisfaction of Signia Assistant’s use.

Results

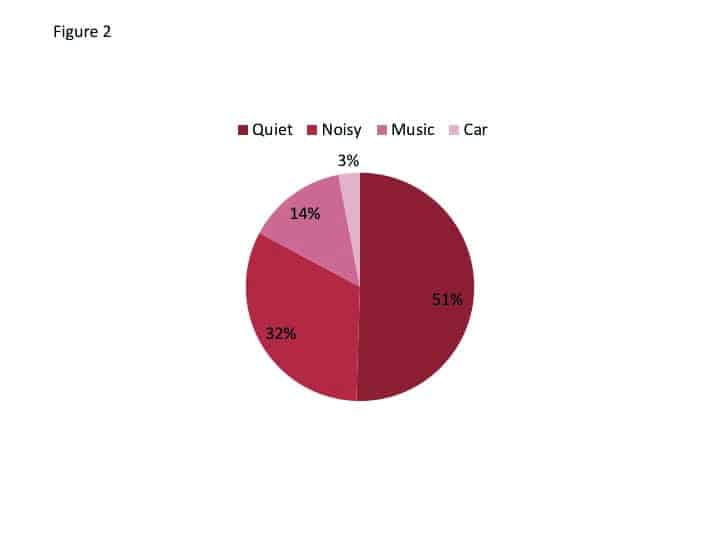

Wearing and usage data. At the time of the fitting, for research purposes, each participant’s app was coded so that usage data could be retrieved at the end of the study (Note: in normal use of the Signia Assistant, all data are 100% anonymous to ensure full data privacy). These data revealed that the average participant wore the hearing aids for 147 hours during the trial period. The most common environment was quiet, followed by noisy, music, and car (Figure 2). During the trial period, the Assistant was used 266 times in total, an average of 18 times per participant. The most common reason for using the Assistant was Sound Quality (62%), followed by Other Voices (25%) and Own Voice (13%). Recall that all participants were fitted with, and had trained, Own Voice Processing, which is designed to improve the perception of one’s own voice; this may explain why Own Voice was the least common reason.

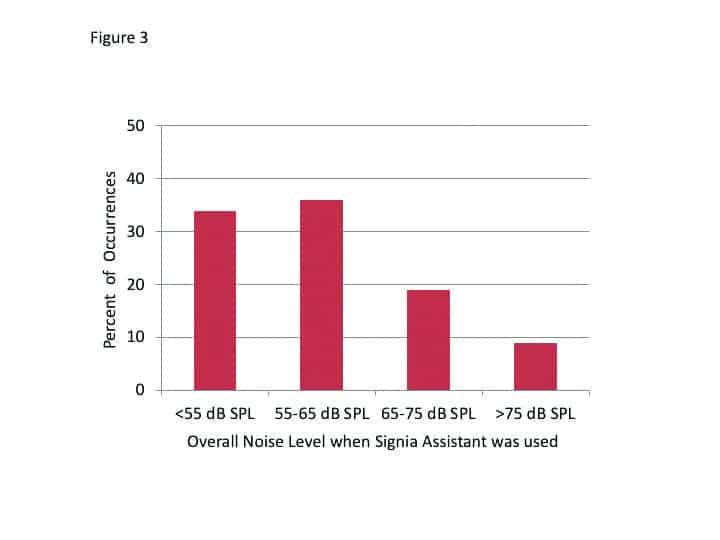

The decision-making of the Signia Assistant is driven in part by the overall noise level for the situation when the user accessed the app. The distribution of the level of the background noise that was present at the time of the 266 events is shown in Figure 3. Observe that in two-thirds of the cases, the Assistant was used when the overall SPL was 65 dB or less. This is consistent with the finding that 50% of the events were for quiet (Figure 2).

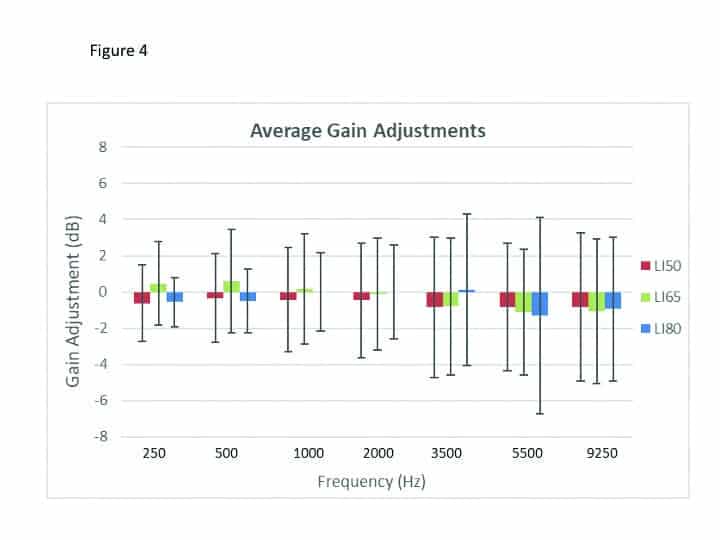

Individualization of hearing aid settings. Of particular interest were the gain changes that resulted from use of the Signia Assistant. There is always some concern that, if the patient is given control after the HCP has verified the fitting, unreasonable changes will be made. This was not the case. Figure 4 shows the changes in gain for a 65 dB SPL input at the end of the home trial (recall that all participants were originally fitted to NAL-NL2 targets). The wearers preferred very different sounds and adjusted their hearing aid settings in both directions: some decreased and others increased amplification, and some moved to more and others to less compressive processing.

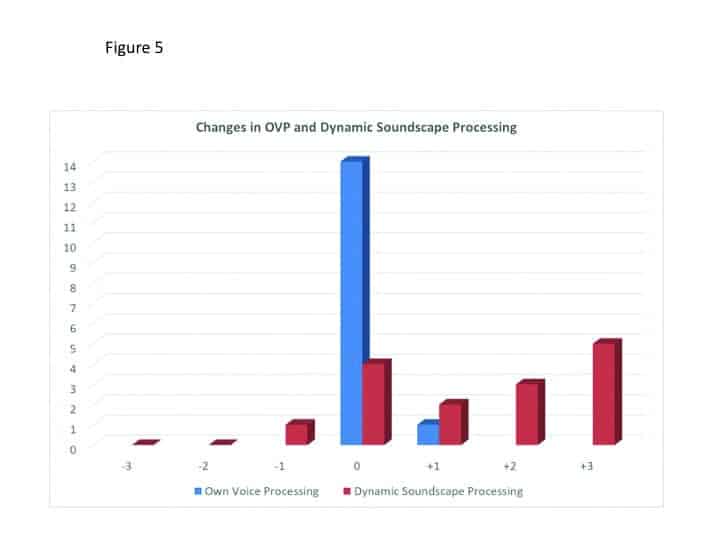

The mean of the individual data was 1-2 dB below NAL-NL2 and reflected a mildly changed compression scheme in the low to mid frequencies. This is consistent with findings for trainable hearing aids initially fitted to NAL-NL2, as reported by Keidser et al.9 Dynamic Soundscape Processing steers the automatic behavior of the noise reduction and directionality algorithms, and the preferred strength is also assumed to be highly individual. This is reflected in the wide spread of settings resulting from use of the Signia Assistant, ranging from a slight decrease relative to the default setting to a strong increase (see Figure 5).
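Large individual changes in opposite directions can still produce a small mean offset from the prescription. The sketch computes the per-frequency mean of fitted gain minus target gain across wearers. The two wearers and their gain values are invented for illustration and are not study data.

```python
from statistics import mean


def mean_deviation_from_target(fitted, targets):
    """Per-frequency mean of (fitted gain - prescribed target gain) across wearers, in dB.

    `fitted` and `targets` map wearer id -> {frequency_hz: gain_db}.
    """
    freqs = sorted(next(iter(targets.values())))
    return {f: mean(fitted[w][f] - targets[w][f] for w in targets) for f in freqs}


# Invented example: wearer A turns 500 Hz up and 2000 Hz down; wearer B does the opposite.
targets = {"A": {500: 10, 2000: 20}, "B": {500: 12, 2000: 25}}
fitted = {"A": {500: 12, 2000: 17}, "B": {500: 8, 2000: 26}}
offsets = mean_deviation_from_target(fitted, targets)
```

Here the individual deviations range from -4 to +2 dB, yet the mean offset is only -1 dB at both frequencies. This is why individual spreads, and not only means, are worth inspecting.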

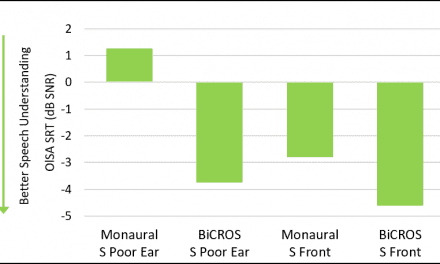

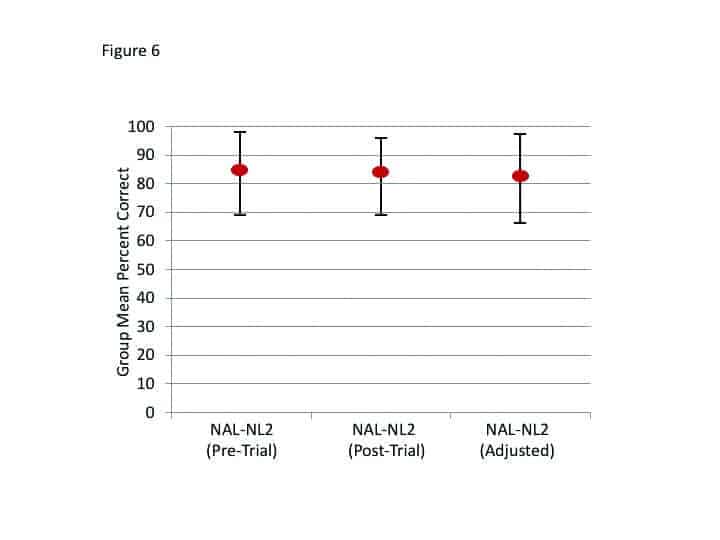

Speech recognition. Following the home trial, speech recognition testing with the NU-6 (in quiet) and the AEMT was repeated for both the original NAL-NL2 fitting and the fitting that resulted from use of the Signia Assistant, with the order of testing counterbalanced. The results for speech recognition in quiet are shown in Figure 6, including the NAL-NL2 results obtained on the day of the fitting (before the home trial). A presentation level slightly softer than normal conversational speech (55 dB SPL) was purposely selected so that meaningful changes in audibility would be detected. The data revealed no significant difference among the three test conditions (p>.05). That is, this speech test suggests that recognition performance was maintained with the Signia Assistant settings.

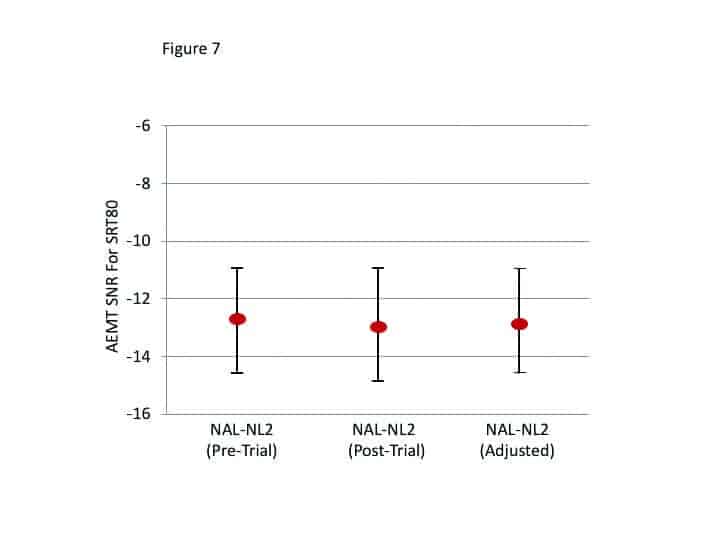

The results of the AEMT are shown in Figure 7. As with the speech-in-quiet testing, no significant differences between test conditions were observed (p>.05).

User experience, perceived benefit, and satisfaction. The user experience of the Signia Assistant, rated after 7 days of use, was very positive. On a 5-point scale (Strongly Agree to Strongly Disagree), 80% agreed that they had confidence in the system, and 73% agreed that they would like to use it frequently, that it was not too complex, and that it was easy to use. Only 7% believed that it was too complex and difficult to use, and only 14% believed that they would need technical assistance.

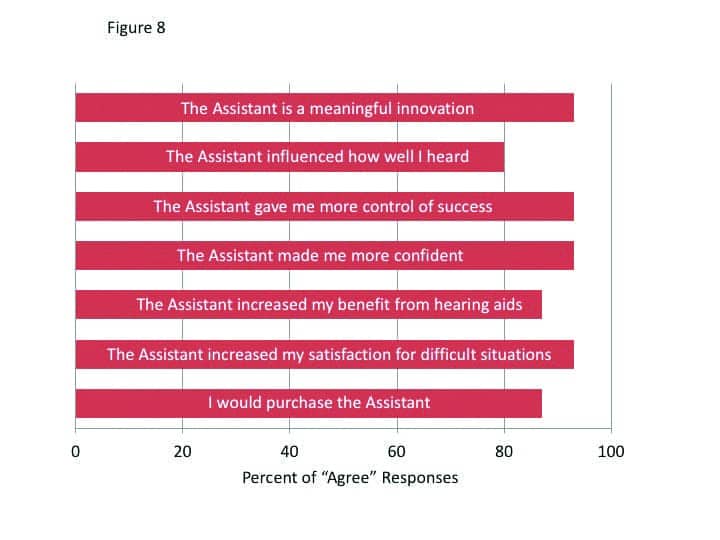

Perceived benefit and satisfaction were rated on a 7-point scale (Strongly Agree to Strongly Disagree, with a midpoint of Neutral) during the final appointment. Figure 8 displays the findings for these questions as the summed percentage of the three “agree” ratings (ie, a rating of 5, 6, or 7). All findings were very positive: many items reached 93% agreement, and no more than 7% of responses fell into the “disagree” category for any item. The final question asked whether participants would recommend the Signia Assistant to a friend, on a 1-10 scale (1 = Strong No; 10 = Strong Yes). All participants gave a rating of at least 5, and 73% gave a rating of 8 or higher.
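The summed “agree” percentage is the share of ratings at or above 5 on the 7-point scale. With 15 participants, each person is about 6.7 percentage points, so 93% corresponds to 14 of 15 and 73% to 11 of 15. The sketch uses an invented set of ratings to show the arithmetic.

```python
def percent_agree(ratings, agree_min=5):
    """Share of 7-point ratings (1 = Strongly Disagree ... 7 = Strongly Agree) at or above `agree_min`, in percent."""
    return 100.0 * sum(r >= agree_min for r in ratings) / len(ratings)


# Invented ratings for 15 participants: 14 in the "agree" range, 1 below it.
example_ratings = [7] * 10 + [6] * 3 + [5] + [3]
share = percent_agree(example_ratings)  # 14 / 15 = 93.3%
```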

Interestingly, 80% of participants reported that the Signia Assistant improved how well they heard, and 93% stated that using the Assistant improved their satisfaction with listening in difficult situations. This was not revealed in the speech testing, which goes back to our earlier discussion of the value of EMA: an important but difficult listening situation for one individual might have an SNR of +2 dB, and for another, +10 dB. These findings also show that individuals can make changes that improve their hearing in a variety of situations without reducing their performance on standardized speech tests.

Summary

The results indicate that the Signia Assistant, with its neural network, helps ensure that the end user gets the best possible solution for a given situation, tailored to their specific needs and preferences. It extends the fitting process into the real world, outside the clinical setting. It marks an important step from assumption-based to data-driven knowledge, moving away from a “one-size-fits-all approach” toward precisely tuned hearing for each individual.

The results of this research also clearly show that the participants liked the Signia Assistant and were able to handle their fine-tuning needs with it. They changed their hearing aid settings in a reasonable way, improving satisfaction in difficult listening situations without compromising speech intelligibility measures.

Reducing the number of follow-up appointments is linked to higher satisfaction, and this study indicates that the Signia Assistant can be a valuable tool for achieving this. The participants reported increased satisfaction with how well they heard in general, and specifically in difficult listening situations. This indicates that the Assistant provided solutions that helped them immediately in situations that are hard to replicate in an office or to describe in retrospect.

We observed a marked increase in confidence through empowerment among the participants, as they felt more in control of their hearing success. This is also reflected in the fact that most said they would look for such an assistant in their next hearing aid purchase.

This tool also benefits the hearing care professional. It acts both as an extended arm reaching the wearer outside the clinic and as a source of insight into the wearer’s real-world experience.

References

1. Jenstad LM, Van Tasell DJ, Ewert C. Hearing aid troubleshooting based on patients’ descriptions. J Am Acad Audiol. 2003;14(7):347-360.

2. Kochkin S, Beck DL, Christensen LA, et al. MarkeTrak VIII: The impact of the hearing healthcare professional on hearing aid user success. Hearing Review. 2010;17(4):12-34.

3. Froehlich M, Branda E, Apel D. Signia TeleCare facilitates improvements in hearing aid fitting outcomes. AudiologyOnline. Published January 4, 2019.

4. Jorgensen L, Van Gerpen T, Powers TA, Apel D. Benefit of using telecare for dementia patients with hearing loss and their caregivers. Hearing Review. 2019;26(6):22-25.

5. Thielemans T, Pans D, Chenault M, Anteunis L. Hearing aid fine-tuning based on Dutch descriptions. Int J Audiol. 2017;56(7):507-515.

6. Høydal EH, Aubreville M. Signia Assistant Backgrounder. Signia; 2020. https://www.signia-library.com/wp-content/uploads/sites/137/2020/05/Signia-Assistant_Backgrounder.pdf.

7. Wolf V. How to use Signia Assistant.

8. Brooke J. SUS–A quick and dirty usability scale. In: Jordan PW, Thomas B, Weerdmeester BA, McClelland IL, eds. Usability Evaluation in Industry. Taylor and Francis; 1996.

9. Keidser G, Alamudi K. Real-life efficacy and reliability of training a hearing aid. Ear Hear. 2013;34(5):619-629.

Correspondence to Dr Branda.

Original citation for this article: Hoydal EH, Fisher R-L, Wolf V, Branda E, Aubreville M. Empowering the wearer: AI-based Signia Assistant allows individualized hearing care. Hearing Review. 2020;27(7):22-26.